The new Ceph container demo is super dope!

I have recently been working on refactoring our Ceph container images.

We used to have two separate images for daemon and demo.

Recently, for Luminous, I decided to merge the demo container into daemon.

It makes everything easier: the code lives in a single place, we only have a single image to test in the CI, and users have a single image to play with.

As a reminder, this is what the container can do for you:

- Bootstrap a single Ceph monitor

- Bootstrap a single Ceph OSD with BlueStore (running on a filesystem)

- Bootstrap a single MDS server

- Bootstrap a RGW instance with optionally a user and the ability to interact with

s3cmd. - Bootstrap a rbd-mirror daemon

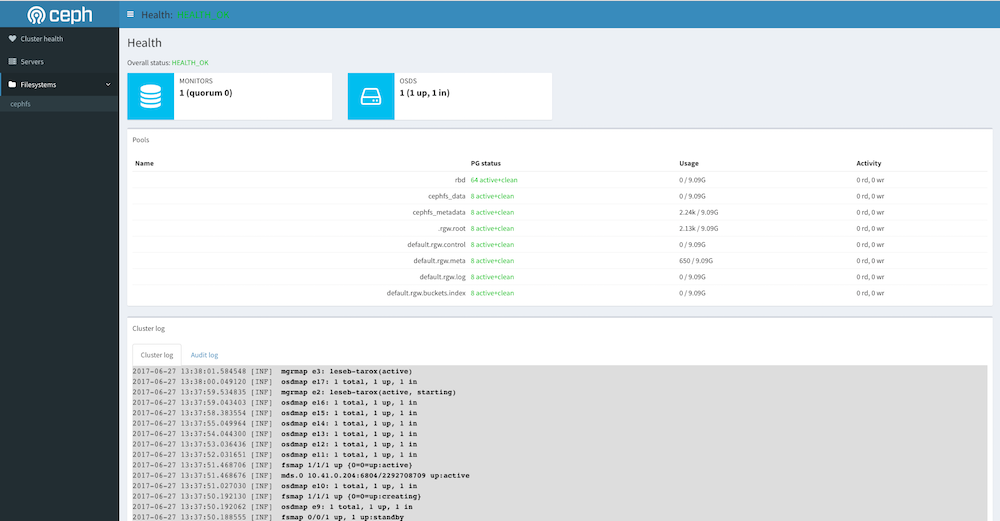

- Bootstrap a ceph-mgr daemon with its dashboard

This is how to run it:

docker run -d \

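Obviously, adapt both MON_IP and CEPH_PUBLIC_NETWORK to your host: MON_IP is the IP address of the host, and CEPH_PUBLIC_NETWORK is the network (in CIDR notation) that contains it.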

It’s handy to bind-mount both /var/lib/ceph and /etc/ceph so that the cluster data and configuration persist and the container can survive a restart.
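To make the two variables and the bind mounts concrete, here is a minimal sketch of such an invocation wrapped in a small shell function. It is not necessarily the exact command I run: the image name (ceph/daemon), the demo argument, the --net=host flag, the start_ceph_demo function name and the example addresses are all assumptions for illustration.

```shell
# Sketch only: image name, "demo" argument and --net=host are assumptions.
# DOCKER can be overridden (for example DOCKER=echo) to preview the command
# instead of running it.
start_ceph_demo() {
  # $1: IP address of the host, used as MON_IP
  # $2: public network in CIDR notation, used as CEPH_PUBLIC_NETWORK
  ${DOCKER:-docker} run -d \
    --net=host \
    -v /etc/ceph:/etc/ceph \
    -v /var/lib/ceph:/var/lib/ceph \
    -e MON_IP="${1:?usage: start_ceph_demo MON_IP PUBLIC_NETWORK}" \
    -e CEPH_PUBLIC_NETWORK="${2:?usage: start_ceph_demo MON_IP PUBLIC_NETWORK}" \
    ceph/daemon demo
}
```

You would call it with something like start_ceph_demo 192.168.0.20 192.168.0.0/24 (example values). Setting DOCKER=echo first prints the arguments without starting anything, which is a cheap way to check them.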

Output example on my test system:

$ sudo ceph -s

This container also enables the new ceph-mgr dashboard.

Enjoy this nice preview of Luminous. The current image on the Docker Hub is built on the first Luminous RC.
